WHAT IS SEO?

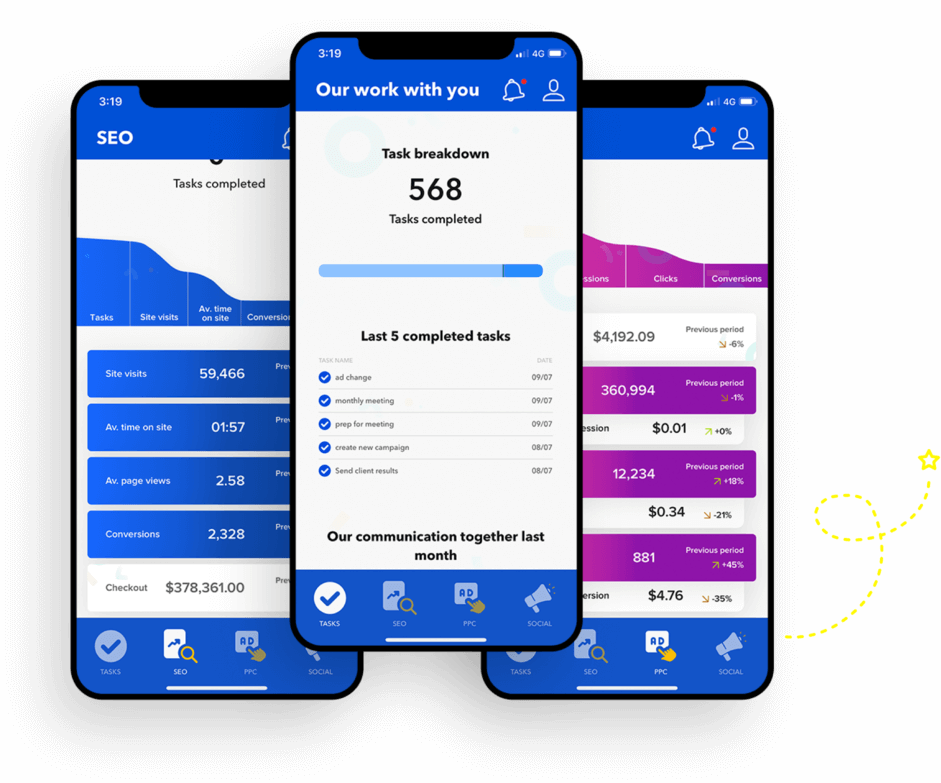

Any SEO specialist worth their salt starts their SEO strategy with an audit. Once you come on board with OMG, we’ll get to know you and your business before conducting a thorough SEO audit of your website. We’ll analyze everything from your website’s layout to its copy and landing pages to determine where it stands against your competitors.

Our Gurus will then recommend how to take your website to the next level through various SEO solutions. We’ll identify quick wins, long-term opportunities, and anything else that will help your website climb Google’s rankings.

One of the simplest and most effective ways to win online with SEO marketing is through keywords. Keywords are the words your existing and potential customers use to search for products and services within your industry. Our job is to find your business’ most profitable and relevant keywords.

To do that, we’ll first need to understand what the average customer looks like for your business and how they behave online. We’ll consider factors such as the customer journey, search platform, and more to maximize your website’s visibility wherever possible.

Users gravitate toward sites with exceptional content. From informative blog posts to dazzling product descriptions and detailed FAQs, you need high-quality copy crafted to attract and retain customers. Our talented team of copywriters will produce unique and engaging content that strikes the right balance between search engine optimization and copy that brings your brand to life.

They’ll tell stories, inform your customers about your business, and showcase your unique product offerings in a light that’s hard to resist.

Link building is all about building your business’ domain authority to improve your online rankings. It refers to the process of getting high-ranking and credible websites to link to yours to boost your own credibility. Think of it as a helpful recommendation from a friend.

Our SEO specialists will focus on building a catalog of high-quality links that give your site the ranking boost it needs to attract new customers and that are also relevant and useful to your current audience.

Premium SEO strategies not only consider the elements a user sees on a page but also what goes on under the hood. Technical SEO is the process of improving your website’s accessibility, navigation, and crawlability for users and search engines. Depending on your site’s needs, this could include everything from improving page speed to implementing schema markup, setting up SSL (HTTPS), fixing 404 errors, updating your XML sitemap, and tweaking your internal linking structure.
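
To make one of these tasks concrete, here is a minimal sketch of how an XML sitemap could be generated in Python. It illustrates the sitemap format only and does not describe OMG’s own tooling. The `build_sitemap` helper and the `example.com` URLs are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML as a string for a list of (url, lastmod) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, lastmod in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = lastmod  # YYYY-MM-DD
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical pages; replace with your site's real URLs and dates.
pages = [
    ("https://www.example.com/", "2024-01-15"),
    ("https://www.example.com/services/seo", "2024-01-10"),
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

The resulting file is typically saved as `sitemap.xml` at the site root and submitted to search engines so they can discover new and updated pages.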

Users rely on local and nearby businesses more and more every day, which means your business has the opportunity to tap into these searches. We are a local SEO agency that can help you do that. For example, establishing and optimizing your Google Business Profile (formerly Google My Business) can give your business a captivating online presence. Our SEO specialists will also rely on keywords and other SEO elements to improve your local visibility.
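
One commonly used “other SEO element” for local visibility is structured data that describes the business to search engines. The sketch below assumes schema.org `LocalBusiness` markup in JSON-LD; that choice is an illustration and is not confirmed as part of OMG’s process. The `local_business_jsonld` helper and all business details are hypothetical.

```python
import json


def local_business_jsonld(name, street, city, region, postal_code, phone, url):
    """Build a <script> tag containing schema.org LocalBusiness JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
        },
    }
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Hypothetical business details, for illustration only.
snippet = local_business_jsonld(
    name="Example Bakery",
    street="123 Main St",
    city="Springfield",
    region="IL",
    postal_code="62701",
    phone="+1-555-0100",
    url="https://www.example.com/",
)
print(snippet)
```

The printed snippet would go inside the page’s HTML. Keeping the name, address, and phone number consistent with the business profile listing is generally advisable.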

There are many reasons a business should seriously consider including SEO services as part of its marketing efforts. The majority of online experiences begin with a search engine. By implementing effective SEO solutions, businesses can increase their visibility and exposure to potential customers actively searching for products, services, or information related to their industry.

Additionally, SEO helps attract highly targeted traffic to a website. By optimizing for specific keywords and phrases relevant to their business, companies can reach users more likely to be interested in their offerings, resulting in better conversion rates and higher chances of generating leads or making sales.

SEO involves optimizing various website elements, such as site structure, navigation, page speed, and mobile-friendliness. These optimizations help search engines understand and rank the site better and enhance the user experience. A user-friendly website with easy navigation and fast loading times can lead to higher engagement, reduced bounce rates, and increased conversions.

Effective SEO services can also positively contribute to your business’ online reputation. High-ranking websites are often perceived as more trustworthy and credible by users. When a website appears on the first page of search results, it signals to users that the search engine considers it relevant and authoritative, leading to increased trust and credibility for the business.

In today’s digital landscape, businesses face fierce competition. Investing in SEO solutions can give them a competitive edge by helping their website rank higher than their competitors’ websites in search results.